Most teams asking how to show up in Google AI Mode are solving the wrong problem.

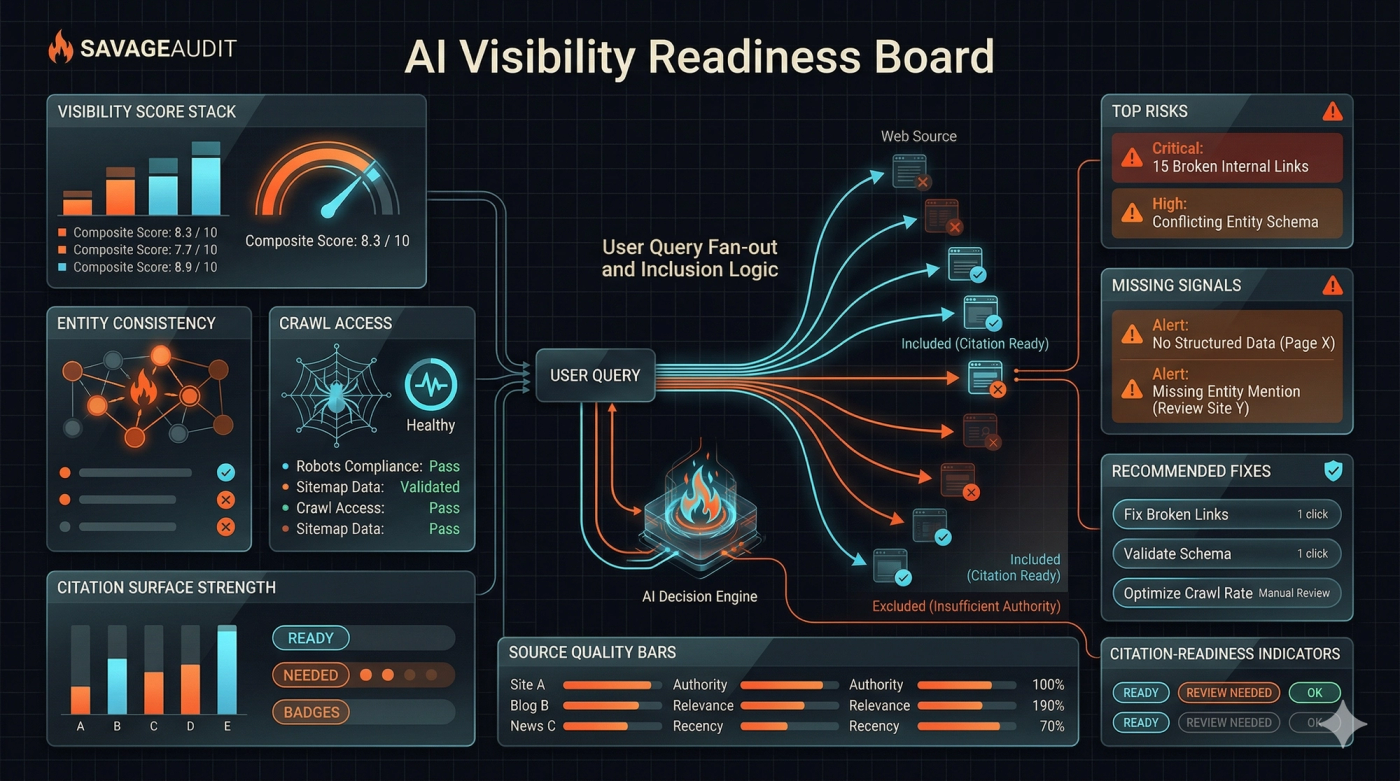

They obsess over whether there is a secret AI Mode trick, a hidden schema type, or some new machine-readable file that magically gets a page cited. Google's own documentation points in the opposite direction. AI features still rely on core search foundations, and AI Mode can use query fan-out to retrieve a wider set of supporting pages than a normal one-shot search. That means the real question is not "How do I hack AI Mode?" It is "Does my site stay legible when one user question turns into many retrieval paths?" See Google's guidance on AI features and your website, plus the product explanation in AI Mode in Google Search: Updates from Google I/O 2025.

If you want a fast way to score that legibility, start with SavageAudit's AI visibility audit. If you want the short version first, here it is: AI Mode is more likely to surface pages that are crawlable, snippet-eligible, tightly scoped, text-rich, well-supported by public evidence, and easy to connect to a clear entity.

What Google has actually said

Google has been more explicit about AI search than most people realize.

The official documentation says that AI Overviews and AI Mode surface relevant links, that the same SEO best practices still apply, and that there are no extra technical requirements or special optimizations needed just to appear. The same guide also says pages must be indexed and eligible to be shown with a snippet. In plain English: if your content is weak in classic search fundamentals, AI Mode does not rescue it. It amplifies the consequences. See AI features and your website, Google Search Essentials, and the in-depth guide to how Search works.

Google also says AI Mode uses query fan-out, which means one complex prompt can be broken into multiple subtopic searches behind the scenes. That is the part most teams miss. Your page is not only competing for one broad head term. It may be retrieved through a narrower question, a supporting comparison, a definitions query, a trust query, or an evidence query created during that fan-out process. See AI Mode in Google Search: Updates from Google I/O 2025.

| Google guidance | What it means in practice |
|---|---|
| AI Mode and AI Overviews surface relevant links | Your page has to be retrievable as a useful source, not just rank somewhere |
| Query fan-out expands a question into subtopics | Narrow supporting pages often matter more than one giant generic page |
| No special AI Mode-only optimization is required | Do not waste time inventing fake AI SEO rituals |
| Pages must be indexable and snippet-eligible | Blocked, thin, or snippet-restricted content loses surface area fast |
| Existing SEO best practices still apply | Crawlability, internal links, text clarity, structured data, and page quality still do the heavy lifting |

Why supporting links are won before the final answer is assembled

This is the operational shift.

In classic SEO, teams often think in terms of ranking one page for one query. In AI Mode, the retrieval path is broader. A strong page can still lose if it fails one of the smaller tests that happen during fan-out.

For example, a homepage might rank for a brand term but still fail to become a supporting link for a high-intent AI Mode question if it does not answer a concrete subtopic cleanly. A long product page might rank for a category term but get skipped if the useful answer is buried under vague claims, weak headings, and no extractable proof. A thought-leadership page might be well written and still get ignored if the brand entity behind it is thin across the public web.

That is why an AI visibility audit should not stop at "Are we indexed?" It should ask whether the site survives query decomposition.

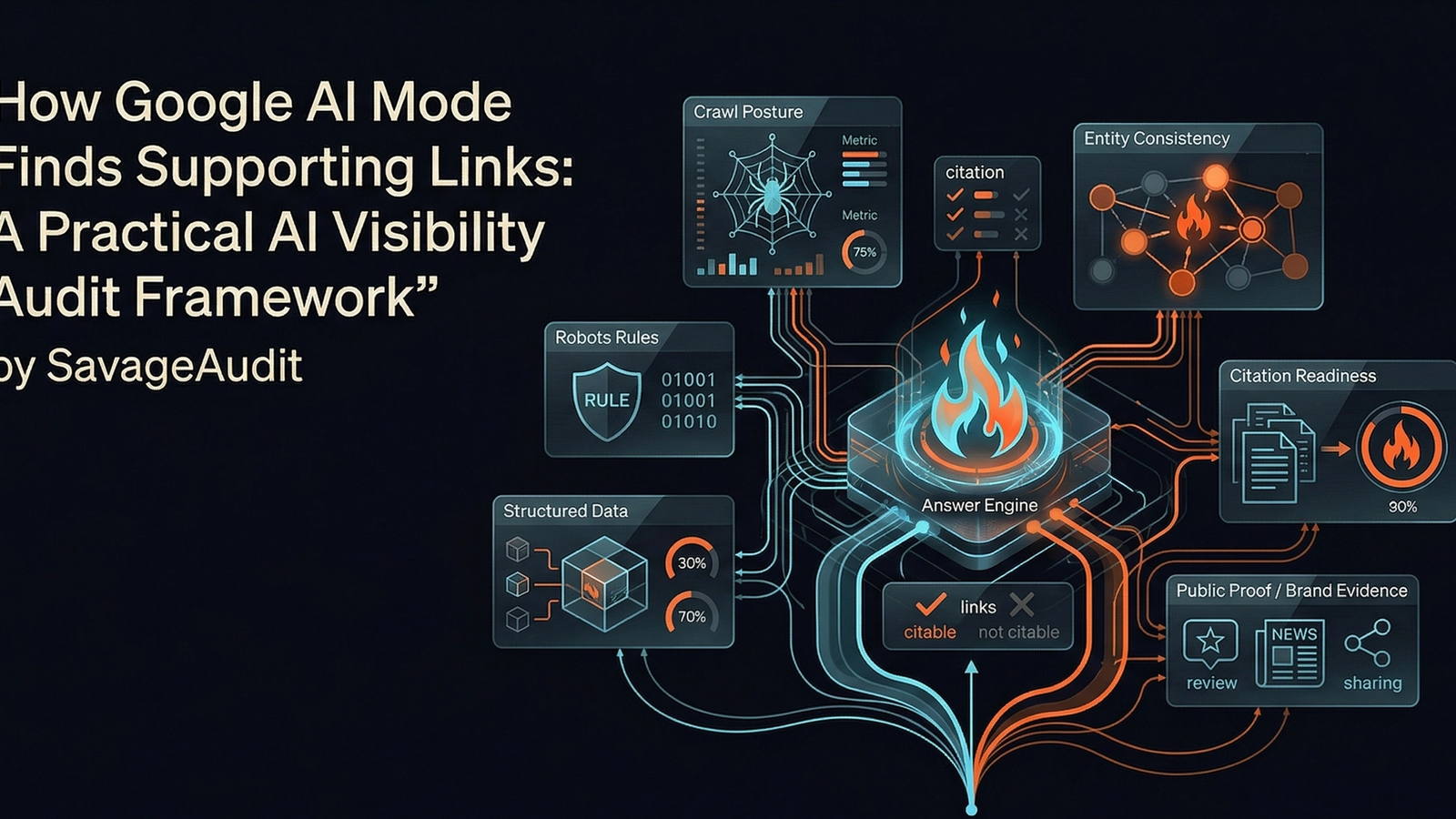

The practical AI visibility audit framework

This is the framework we use to pressure-test whether a page can become a supporting link in AI search.

1. Crawl posture

Start with whether Google can reach and process the page reliably.

This sounds basic because it is basic. But basic failures still kill AI visibility first. Google explicitly recommends making sure robots.txt, CDN rules, and hosting infrastructure allow crawling. Internal links should also make content easy to discover. See AI features and your website.

Check:

- Is the page indexable and not blocked by robots.txt rules, auth walls, or accidental noindex directives?

- Are core text sections rendered in HTML instead of hidden behind fragile client-side behavior?

- Does the page receive internal links from relevant category, feature, and blog pages?

- Can Google discover supporting assets like images and videos if they matter to the answer?

If this layer is weak, stop here and fix it before pretending the problem is "AI search."
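The first check can be partly automated. Below is a minimal Python sketch, assuming you have already fetched the site's robots.txt, the page's HTML, and any X-Robots-Tag response header yourself; the class and function names are hypothetical. It uses only the standard library's `urllib.robotparser` and `html.parser`. Treat the result as a first pass: Python's robots.txt parser does not implement all of Google's matching rules (such as `*` and `$` wildcards and longest-match precedence), and the sketch ignores CDN rules, auth walls, user-agent-scoped X-Robots-Tag values, and JavaScript rendering.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            for part in attr_map.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def crawl_posture(robots_txt: str, url: str, html: str, x_robots_tag: str = "") -> dict:
    """Report whether Googlebot may crawl the URL and whether the page asks not to be indexed."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())

    meta = RobotsMetaParser()
    meta.feed(html)
    # Simplification: assumes the header value has no "googlebot:" style user-agent prefix.
    header = {part.strip().lower() for part in x_robots_tag.split(",") if part.strip()}
    directives = meta.directives | header

    return {
        "crawl_allowed": robots.can_fetch("Googlebot", url),
        # "none" is documented as equivalent to "noindex, nofollow".
        "noindex": "noindex" in directives or "none" in directives,
    }


robots_txt = "User-agent: *\nDisallow: /private/\n"
page = '<head><meta name="robots" content="noindex, follow"></head>'
print(crawl_posture(robots_txt, "https://example.com/private/report", page))
# {'crawl_allowed': False, 'noindex': True}
```

Keeping robots.txt and noindex as separate flags matters because they fail differently: a robots.txt block stops crawling, while noindex lets Google crawl the page but keeps it out of the index.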

2. Snippet and extractability readiness

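Google says that to be eligible as a supporting link in AI Overviews or AI Mode, a page must be indexed and eligible to be shown in Search with a snippet. A page that is snippet-restricted, or whose useful answer is hard to extract, gives AI Mode little to cite.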

Check:

- Are the strongest claims present as visible text, not just inside images or interactive widgets?

- Does each section answer one clear question with one clear scope?

- Are headings specific enough to stand alone when retrieval narrows to a subtopic?

- Do paragraphs get to the point fast, or do they drown the answer in brand filler?

Bad extractability usually looks like this:

- vague hero copy

- giant paragraphs with no scannable structure

- proof hidden three scrolls down

- FAQ sections written like marketing scripts instead of actual answers

If a model has to work too hard to find the usable sentence, another page wins.
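Extractability is mostly an editorial judgment, but snippet eligibility has machine-readable controls you can check. Google documents the `nosnippet` and `max-snippet` robots meta directives (where `max-snippet:0` is equivalent to `nosnippet`) and the `data-nosnippet` HTML attribute for excluding parts of a page. A minimal Python sketch that reports them from static HTML follows; the names are hypothetical, and it does not read X-Robots-Tag headers.

```python
from html.parser import HTMLParser


class SnippetControlParser(HTMLParser):
    """Collects robots meta directives and counts elements marked data-nosnippet."""

    def __init__(self):
        super().__init__()
        self.directives = []
        self.nosnippet_elements = 0

    def handle_starttag(self, tag, attrs):
        attr_map = {name: (value or "") for name, value in attrs}
        if "data-nosnippet" in attr_map:
            self.nosnippet_elements += 1
        if tag == "meta" and attr_map.get("name", "").lower() in ("robots", "googlebot"):
            self.directives += [p.strip().lower() for p in attr_map.get("content", "").split(",")]


def snippet_readiness(html: str) -> dict:
    parser = SnippetControlParser()
    parser.feed(html)

    max_snippet = None  # None means no max-snippet directive was found
    for directive in parser.directives:
        if directive.startswith("max-snippet:"):
            try:
                max_snippet = int(directive.split(":", 1)[1])
            except ValueError:
                pass  # ignore malformed values rather than guessing

    return {
        # max-snippet:0 is documented as equivalent to nosnippet.
        "snippet_blocked": "nosnippet" in parser.directives or max_snippet == 0,
        "max_snippet": max_snippet,
        "data_nosnippet_elements": parser.nosnippet_elements,
    }


html = '<meta name="robots" content="max-snippet:-1"><div data-nosnippet>Pricing</div>'
print(snippet_readiness(html))
# {'snippet_blocked': False, 'max_snippet': -1, 'data_nosnippet_elements': 1}
```

A `data-nosnippet` count above zero is not automatically a problem, but it is worth confirming that none of those elements wrap the answer blocks you want cited.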

3. Entity clarity

AI Mode does not only retrieve text. It also has to understand who is speaking.

Google's structured data documentation explains that structured data helps Google understand page content and information about entities in the world more generally. That does not mean schema alone gets you cited. It means clean entity signals reduce ambiguity. See intro to structured data.

Check:

- Is the brand name written consistently across the site?

- Are company, product, and author identities cleanly separated?

- Do About, Contact, product, legal, and profile pages reinforce the same entity?

- Is structured data present where it is appropriate, and does it match visible content?

This is where SavageAudit's internet and social presence audit matters. If the open web has weak or inconsistent public signals around your brand, AI systems have less confidence in connecting your content to a trustworthy source.
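One way to keep entity signals consistent is to generate Organization markup from a single record instead of hand-editing it on each template. The sketch below assumes schema.org's `Organization` type with the `name`, `url`, `logo`, and `sameAs` properties; the `ENTITY` values are placeholders, and `name_is_visible` is a hypothetical, deliberately crude check that the marked-up name also appears in the page's visible text.

```python
import json

# Hypothetical single source of truth for the brand entity; all values are placeholders.
ENTITY = {
    "name": "Example Co",
    "url": "https://www.example.com/",
    "logo": "https://www.example.com/logo.png",
    "same_as": [
        "https://www.linkedin.com/company/example-co",
        "https://github.com/example-co",
    ],
}


def organization_jsonld(entity: dict) -> str:
    """Render schema.org Organization markup as a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": entity["name"],
        "url": entity["url"],
        "logo": entity["logo"],
        "sameAs": entity["same_as"],
    }
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


def name_is_visible(entity: dict, visible_text: str) -> bool:
    """Structured data should match visible content: check the exact brand name appears."""
    return entity["name"] in visible_text


print(organization_jsonld(ENTITY))
print(name_is_visible(ENTITY, "About Example Co"))
```

The exact-match check is strict on purpose: "Example Co" and "ExampleCo" are treated as different names, which is the kind of naming drift the entity checklist is meant to catch.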

4. Evidence density

A surprising amount of AI visibility loss is really proof loss.

When Google says AI features help people find relevant links quickly and reliably, the reliability part matters. A page with claims but no evidence is harder to trust as a supporting source than a page with examples, specific numbers, concrete comparisons, or public references.

Check:

- Are important claims backed by data, examples, screenshots, or named references?

- Do comparison pages explain trade-offs instead of just declaring victory?

- Do category pages include definitions, methodology, and real constraints?

- Is there any independent public footprint reinforcing the same claims?

This is one reason thin SaaS copy struggles in AI search. It is not just generic. It is unsupported.

5. Answer-surface coverage

Query fan-out rewards coverage across subtopics, not just one polished pillar page.

A lot of teams publish one giant page and expect it to do everything: educate, convert, rank, define terms, compare options, and capture AI citations. Usually it does none of that well.

Instead, map your coverage like this:

- Core commercial page

- Supporting explainer

- Methodology page

- Comparison page

- Proof or research page

For SavageAudit, that is exactly why the site has separate commercial surfaces, such as the AI visibility audit, the website audit categories, and the full-site audit, with the blog handling research and supporting education instead of trying to impersonate a landing page.
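To make a coverage map concrete, you can tag each likely fan-out sub-question with the page role that should answer it, then flag roles that have no URL on the site. This is a hypothetical Python sketch: the role names mirror the five page types listed in this section, and the paths and questions in the usage example are illustrative placeholders.

```python
from dataclasses import dataclass

# The five page roles from the coverage list.
ROLES = ("commercial", "explainer", "methodology", "comparison", "proof")


@dataclass
class SubQuestion:
    text: str
    role: str  # which page role should answer this sub-question


def coverage_gaps(site_pages: dict, subquestions: list) -> list:
    """Return sub-questions whose expected page role has no URL on the site."""
    gaps = []
    for sq in subquestions:
        if sq.role not in ROLES:
            raise ValueError(f"unknown role: {sq.role}")
        if not site_pages.get(sq.role):
            gaps.append(sq.text)
    return gaps


pages = {"commercial": "/ai-visibility-audit", "explainer": "/blog/what-is-query-fan-out"}
questions = [
    SubQuestion("What does an AI visibility audit cost?", "commercial"),
    SubQuestion("How is the audit scored?", "methodology"),
    SubQuestion("AI visibility audit vs traditional SEO audit", "comparison"),
]
print(coverage_gaps(pages, questions))
# ['How is the audit scored?', 'AI visibility audit vs traditional SEO audit']
```

Mapping questions to roles rather than to specific URLs keeps the gap list focused on missing page types, which is the failure this section describes.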

6. Internal routing for fan-out paths

Supporting links are often won by pages that the site itself makes easy to contextualize.

If your internal linking only points everything back to the homepage, you are starving the retrieval graph. Google explicitly recommends making content easier to discover through internal links. See AI features and your website.

Check:

- Does the main commercial page link to supporting educational pages?

- Do blog posts link back to the relevant commercial surface?

- Do related pages connect adjacent intents instead of living in silos?

- Can a crawler understand which page is the best answer for each narrow topic?

Internal linking is not just an SEO housekeeping task anymore. It is how you tell a retrieval system where supporting evidence lives.
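The routing checks can be run against a crawl export. The sketch below assumes you already have a map from each internal path to the internal paths it links to (building that map is out of scope). It flags orphans, meaning pages with no inbound internal links, and pages whose only internal links point back to the homepage. The function name and example paths are hypothetical.

```python
from collections import defaultdict


def audit_internal_links(links: dict, home: str = "/") -> dict:
    """links maps each page path to the set of internal paths it links to."""
    inbound = defaultdict(set)
    for source, targets in links.items():
        for target in targets:
            if target != source:  # self-links do not count as inbound routing
                inbound[target].add(source)

    orphans = sorted(p for p in links if p != home and not inbound[p])
    # Pages that link somewhere, but only back to the homepage.
    home_only = sorted(
        p for p, targets in links.items() if p != home and targets and set(targets) <= {home}
    )
    return {"orphans": orphans, "home_only": home_only}


site = {
    "/": {"/ai-visibility-audit", "/blog"},
    "/ai-visibility-audit": {"/"},
    "/blog": {"/", "/blog/query-fan-out"},
    "/blog/query-fan-out": {"/"},
    "/blog/entity-clarity": {"/"},
}
print(audit_internal_links(site))
```

In this example the commercial page never links to its supporting posts, the fan-out post never links back to the commercial page, and the entity-clarity post is unreachable through internal links, which are the gaps the checklist above asks about.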

7. People-first usefulness

This is the boring answer that keeps being the right answer.

Google's people-first content guidance is still the cleanest filter for AI visibility work. If the page exists mainly to harvest search demand without adding real value, it may still get indexed, but it is a weaker candidate for citation and support. See Creating helpful, reliable, people-first content and the SEO Starter Guide.

Check:

- Would the page still be useful if search traffic disappeared tomorrow?

- Is the page written by someone with real experience or just assembled from generic summaries?

- Does it answer the next obvious question, not just the first one?

- Does it include original framing, examples, data, or methodology?

If the answers are mostly no, the page may survive basic indexing but fail higher-trust retrieval.

What an AI-Mode-ready page usually looks like

The pattern is not mysterious.

| Weak page | Strong page |
|---|---|
| Broad headline with no concrete scope | Specific headline tied to one clear user question |
| Claim-heavy copy with no proof | Claims backed by examples, numbers, or references |
| Generic sections like "Why choose us" | Sections that answer distinct subtopics directly |
| One page trying to cover everything | Clear page roles across commercial, educational, and proof content |
| Inconsistent brand/entity signals | Consistent brand naming and supporting public footprint |
| Poor internal linking | Obvious links between page clusters and evidence sources |

A simple audit workflow for teams

If you want to turn this into work instead of theory, use this order.

1. Pick one page that should plausibly appear as a supporting link for a real AI search question.

2. Write down the likely fan-out subquestions behind that prompt.

3. Score the page for crawlability, extractability, entity clarity, evidence density, and internal routing.

4. Identify the missing support pages instead of forcing everything into one URL.

5. Tighten the proof layer and visible answer blocks first.

6. Re-check how the page connects to adjacent pages in the same topic cluster.
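If you want the scoring step to be repeatable across pages, a small rubric helps. The sketch below scores the five dimensions named in the workflow on a 0 to 2 scale and returns the weakest ones first. The scale, the equal weighting, and the rule that a crawlability score of 0 blocks everything else are assumptions for illustration (the last one mirrors the framework's advice to fix crawl posture first), not a description of how any particular audit tool scores pages.

```python
# The five dimensions named in the scoring step of the workflow.
DIMENSIONS = (
    "crawlability",
    "extractability",
    "entity_clarity",
    "evidence_density",
    "internal_routing",
)


def audit_page(scores: dict) -> dict:
    """Scores are 0 (failing), 1 (partial), or 2 (solid); this scale is an assumption."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    if any(scores[d] not in (0, 1, 2) for d in DIMENSIONS):
        raise ValueError("each score must be 0, 1, or 2")

    # Assumed gate: a crawl failure makes the other scores moot.
    if scores["crawlability"] == 0:
        return {"blocked": True, "total": 0, "fix_first": ["crawlability"]}

    # Equal weights (an assumption); sorted() is stable, so ties keep DIMENSIONS order.
    weak = [d for d in DIMENSIONS if scores[d] < 2]
    return {
        "blocked": False,
        "total": sum(scores[d] for d in DIMENSIONS),
        "fix_first": sorted(weak, key=lambda d: scores[d]),
    }


example = {
    "crawlability": 2,
    "extractability": 1,
    "entity_clarity": 2,
    "evidence_density": 0,
    "internal_routing": 1,
}
print(audit_page(example))
# {'blocked': False, 'total': 6, 'fix_first': ['evidence_density', 'extractability', 'internal_routing']}
```

Returning an ordered fix list instead of a single number keeps the output actionable: the total is useful for comparing pages, but the list is what tells a team where to start.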

If you want that compressed into one workflow, use SavageAudit's AI visibility audit for the AI-search layer and its website audit categories for the broader six-category review.

The real mistake teams make

They treat AI Mode like a new channel with new magic rules.

It is closer to this: classic search fundamentals plus stricter pressure on structure, clarity, and support. Query fan-out means weak pages get exposed faster because the system has more ways to bypass them.

So if you are asking why Google AI Mode is not citing your website, do not start with speculative hacks. Start with a harder question: when Google breaks a user question into smaller retrieval jobs, do you still have the best page in the room?

That is the whole game.

Common questions

Does Google AI Mode need special schema or a new AI metadata file?

No. Google's documentation says there are no additional requirements or special optimizations needed to appear in AI Overviews or AI Mode. Core SEO, snippet eligibility, and technical accessibility still matter most.

Why can a page rank in normal search and still fail to show up as a supporting link in AI Mode?

Because AI Mode can use query fan-out. A page may be relevant to a broad query but still lose narrower supporting retrieval jobs if it lacks clear answer blocks, proof, entity clarity, or internal discovery paths.

What is the first thing to fix if our site is weak in AI search visibility?

Fix crawlability and extractability first. If Google cannot reliably access the page, process the visible text, or use the page as a valid snippet source, the higher-level AI visibility work is mostly theatre.

Is AI visibility different from SEO?

It is different in emphasis, not in foundations. AI visibility puts more pressure on citability, extractable answers, entity consistency, and evidence density, but it still sits on top of standard search requirements.