If your team is shipping llms.txt because it feels like the AI-era version of robots.txt, stop for a minute.

These three things do not solve the same problem.

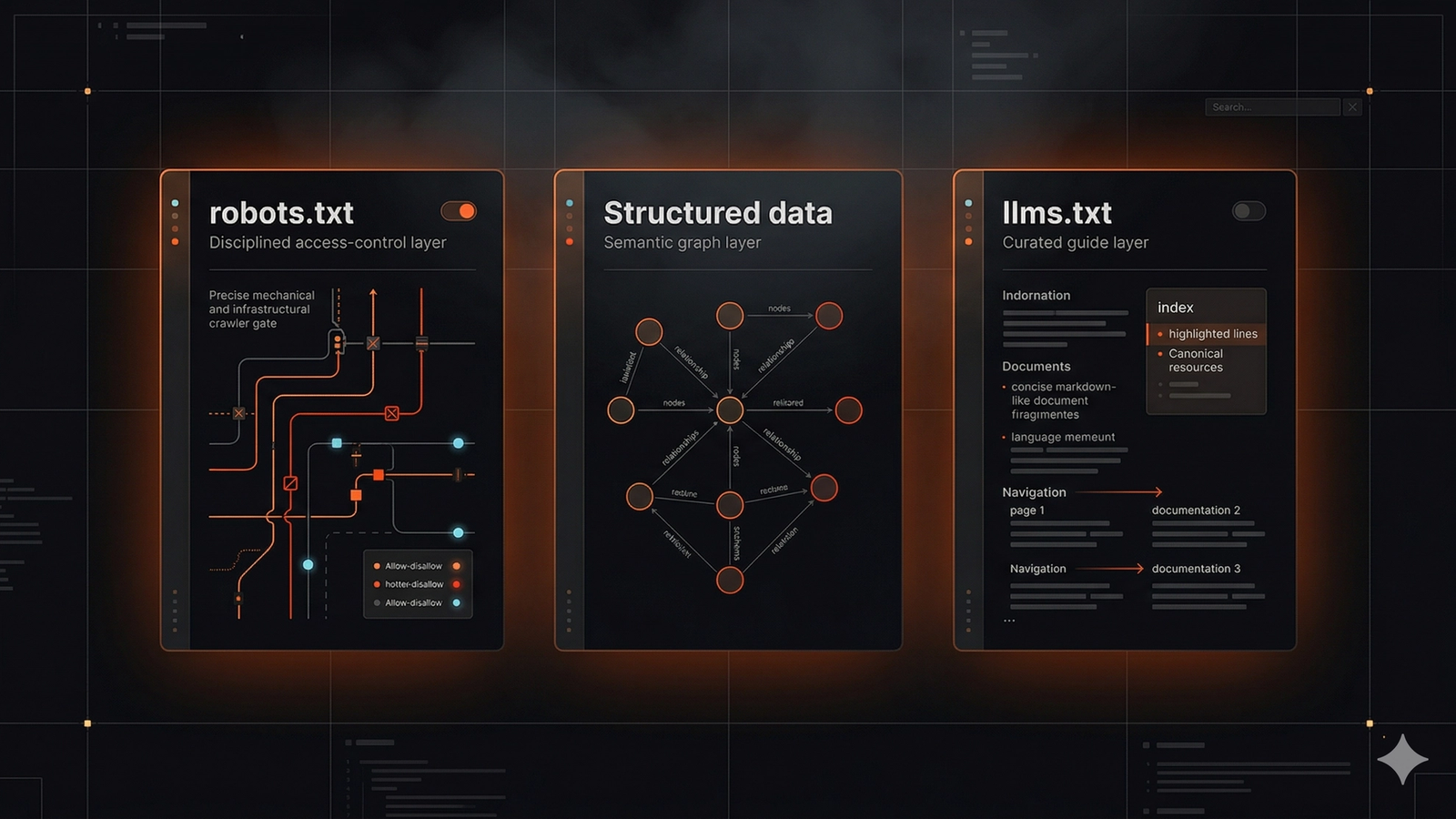

robots.txt controls crawler access. Structured data helps machines interpret entities and page meaning. llms.txt is a proposed convenience file that can point language models to the most useful documentation and reference pages if they choose to read it. That is a very different job from crawl control or semantic markup. See AI features and your website, Google Search Essentials, intro to structured data, and the proposed llms.txt specification.

The big mistake is treating them like substitutes.

Google's own documentation is still the cleanest anchor here: AI features do not require a special AI-only technical setup, and pages still need to be crawlable, indexable, and snippet-eligible. That means most AI discovery wins still come from classic technical hygiene plus clearer page structure. If you want the broader audit layer, that is exactly what SavageAudit's AI visibility audit is built to pressure-test.

The short answer

Here is the clean version.

| Layer | Primary job | What it helps with | What it does not do |
|---|---|---|---|
| `robots.txt` | Controls crawler access at the path level | Allows or blocks crawling of files, directories, and sections | It does not explain meaning, quality, or preferred citations |
| Structured data | Adds machine-readable context to page content | Helps systems understand entities, relationships, and page types | It does not grant automatic rankings or AI citations |
| `llms.txt` | Offers a curated map of important docs for AI systems that choose to read it | Can reduce friction on large docs-heavy sites | It does not replace crawlability, indexability, or strong page content |

If your site has crawl issues, poor extractability, thin content, or weak entity signals, llms.txt will not rescue it.

What robots.txt actually does

robots.txt is still the access-control layer people most often misunderstand.

Its job is simple: tell compliant crawlers which paths they may or may not crawl. It matters because blocked sections cannot become usable discovery surfaces for search systems that honor those rules. If you accidentally block product directories, comparison pages, or supporting resources, you reduce the content available for search features and AI retrieval.

That matters even more when AI systems rely on underlying search infrastructure or the public web graph to discover source material.

What robots.txt is good for:

- keeping low-value crawl traps from eating crawl budget

- keeping crawlers out of duplicate or non-public path patterns when appropriate (it is a crawl directive, not a security control)

- making sure assets and pages needed for rendering are not accidentally denied
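One way to catch an accidental block before it ships is to run the paths you care about through a parser. The sketch below uses Python's standard-library `urllib.robotparser`. The robots.txt content, the paths, and the `ExampleBot` user agent are all made up for illustration, and a real crawler's rule matching may differ in edge cases from what this parser does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /cart/
Allow: /
"""


def crawlable_paths(robots_txt, paths, user_agent="ExampleBot"):
    """Return {path: True/False} for whether user_agent may crawl each path."""
    parser = RobotFileParser()
    # parse() accepts the file as a list of lines, so no network call is needed.
    parser.parse(robots_txt.splitlines())
    return {path: parser.can_fetch(user_agent, path) for path in paths}


# Paths you would want available as discovery surfaces, plus one crawl trap.
report = crawlable_paths(
    ROBOTS_TXT,
    ["/products/widget", "/compare/a-vs-b", "/search/?q=widget"],
)
for path, allowed in report.items():
    print(f"{path}: {'crawlable' if allowed else 'BLOCKED'}")
```

Running a check like this against the pages you most want cited is a cheap guard against a stray `Disallow` line.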

What robots.txt is not good for:

- asking to be cited more often

- describing what your company does

- highlighting the best explanation page for a concept

- proving trust, originality, or expertise

If your AI discovery strategy starts and ends with robots.txt, you are solving the wrong layer.

What structured data actually does

Structured data is about machine-readable meaning, not permission.

Google's documentation is explicit that structured data helps Google understand page content and information about entities more generally. That is useful because AI retrieval systems work better when the page clearly expresses what it is, who published it, and how key entities relate to each other. See intro to structured data.

In practice, structured data helps with things like:

- clarifying that a page is an article, FAQ, product, organization, or breadcrumb path

- reinforcing brand, author, and company identity

- making page relationships cleaner for systems that parse entities

But teams regularly over-attribute what it can do.

Structured data does not turn vague marketing copy into a trustworthy answer. It does not override weak visible content. It does not make a thin comparison page magically become the best source for a nuanced question. It is an amplifier for clarity, not a substitute for clarity.

The easiest way to think about it is this: structured data helps machines interpret the page you already wrote. It does not write a better page for you.
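To make that concrete, here is a minimal sketch of what structured data for an article might look like when generated in Python. It uses schema.org's `Article`, `Person`, and `Organization` types in JSON-LD. The function names, the headline, the author, the publisher, and the URL are placeholders, and which properties a given search feature actually reads is not something this sketch tries to settle.

```python
import json


def article_json_ld(headline, author_name, publisher_name, url):
    """Build a small schema.org Article description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
        "mainEntityOfPage": url,
    }


def as_script_tag(data):
    """Serialize the dict into a JSON-LD script tag for the page head."""
    payload = json.dumps(data, indent=2)
    # Escape "</" so a string value cannot close the script tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values, for illustration only.
data = article_json_ld(
    headline="robots.txt vs structured data vs llms.txt",
    author_name="Jane Doe",
    publisher_name="Example Co",
    url="https://example.com/blog/robots-vs-structured-data-vs-llms-txt",
)
tag = as_script_tag(data)
print(tag)
```

Notice that every value in the markup restates something the visible page should already say. If the headline or author is missing from the page itself, the markup has nothing solid to reinforce.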

What llms.txt actually does

llms.txt is best understood as an optional guidance file, not a discovery guarantee.

The proposed format gives site owners a place to list important documentation, canonical resources, and concise summaries for language models or assistants that choose to fetch the file. On a large docs site, that can be useful. On an API platform, a standards site, or a research-heavy knowledge base, it can reduce ambiguity about which pages matter most.

That is the upside.

The downside is that a lot of teams are treating llms.txt like a new ranking primitive when there is no strong evidence for that. Google's documentation does not list llms.txt as a requirement for AI features, and recent third-party analysis has found low adoption with no consistent proof of citation lift so far. See AI features and your website and LLMs.txt Does Not Boost AI Citations, New Analysis Finds.

That does not mean llms.txt is useless.

It means you should place it in the right order of operations.
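For reference, the file itself is small. The sketch below renders one in the shape the proposal describes as I understand it: a top-level title, a short blockquote summary, then sections of annotated links. Treat the exact layout as an assumption and check it against the current proposal before publishing. The function name, site name, and URLs are hypothetical.

```python
def render_llms_txt(title, summary, sections):
    """Render an llms.txt-style markdown file.

    sections maps a section heading to a list of (name, url, note) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            entry = f"- [{name}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder site and URLs, for illustration only.
llms_txt = render_llms_txt(
    title="Example Docs",
    summary="Reference documentation for the Example API.",
    sections={
        "Docs": [
            ("Quickstart", "https://example.com/docs/quickstart.md", "Install and first request"),
            ("API reference", "https://example.com/docs/api.md", "Endpoints and parameters"),
        ],
        "Optional": [
            ("Changelog", "https://example.com/docs/changelog.md", ""),
        ],
    },
)
print(llms_txt)
```

Generating the file from the same source of truth as your docs navigation is one way to keep it from going stale, which is the maintenance problem that sinks most of these files.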

Why teams confuse these three layers

The confusion usually comes from one of three assumptions.

First, teams assume every machine-readable file is a discovery signal.

Second, they assume AI systems need a brand-new access standard separate from search fundamentals.

Third, they assume that because llms.txt sounds new, it must be where the competitive edge lives.

But the actual retrieval chain is less glamorous than that.

If a page is blocked, weak, vague, snippet-restricted, or unsupported by evidence, that weakness shows up whether or not you publish a helpful text file at the root of the site. The boring fundamentals still decide most of the game. That is why the right comparison is not "Which one should I use?" It is "Which problem am I solving right now?"

What actually helps AI discovery in 2026

If your goal is to show up more reliably in AI search and AI-assisted answers, prioritize these layers first.

1. Crawlability, indexability, and snippet eligibility

Google says AI features depend on the same fundamental requirements as broader Search visibility. Pages need to be crawlable and eligible to show snippets. See AI features and your website.

That means you should verify:

- no accidental `noindex` or blocked paths on key pages

- no broken canonicals pointing authority elsewhere

- no fragile render-only text hidden behind brittle client-side execution

- no snippet restrictions that kneecap the page's usefulness as an answer source

This is basic, but basic failures still cause disproportionate damage.
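A few of those checks are easy to script. The sketch below scans a page's HTML for a robots meta tag and a canonical link using Python's standard-library `html.parser`. The class name, function name, sample markup, and URLs are hypothetical. It only looks at the generic `robots` meta tag, so crawler-specific meta names and `X-Robots-Tag` response headers would need separate checks.

```python
from html.parser import HTMLParser


class IndexabilitySignals(HTMLParser):
    """Collects robots meta directives and the canonical URL from one page."""

    def __init__(self):
        super().__init__()
        self.robots_directives = []
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() == "robots":
            for directive in attr.get("content", "").split(","):
                cleaned = directive.replace(" ", "").lower()
                if cleaned:
                    self.robots_directives.append(cleaned)
        elif tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None


def audit_page(html, page_url):
    """Return a list of human-readable problems found in the page markup."""
    signals = IndexabilitySignals()
    signals.feed(html)
    directives = set(signals.robots_directives)
    problems = []
    if {"noindex", "none"} & directives:
        problems.append("page is marked noindex")
    if {"nosnippet", "max-snippet:0"} & directives:
        problems.append("snippets are disabled")
    if signals.canonical and signals.canonical.rstrip("/") != page_url.rstrip("/"):
        problems.append(f"canonical points elsewhere: {signals.canonical}")
    return problems


# Hypothetical page markup, for illustration only.
SAMPLE_HTML = """
<html><head>
<meta name="robots" content="noindex, nosnippet">
<link rel="canonical" href="https://example.com/other-page">
</head><body><h1>Pricing</h1></body></html>
"""

problems = audit_page(SAMPLE_HTML, "https://example.com/pricing")
for problem in problems:
    print(problem)
```

This reads the HTML as delivered, so it will not see text or tags that only appear after client-side rendering. That gap is itself worth knowing about.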

2. Extractable page structure

AI discovery is not only about whether a page exists. It is also about whether the page can be used.

That means:

- a heading should describe a narrow question clearly

- the next paragraph should answer that question fast

- proof should be visible in text, not buried in screenshots alone

- internal links should connect the page to adjacent concepts and evidence

If a model or search system has to excavate the useful answer out of vague copy, your page loses to a cleaner source.
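The first two points can be spot-checked with a rough heuristic. The sketch below pairs each `h2` or `h3` heading with the first paragraph that follows it and flags headings where no paragraph follows or the paragraph runs long. The 60-word cutoff is an arbitrary assumption, not a known threshold for any search or AI system, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class AnswerBlocks(HTMLParser):
    """Pairs each h2/h3 heading with the first <p> that follows it."""

    HEADINGS = {"h2", "h3"}

    def __init__(self):
        super().__init__()
        self.blocks = []      # list of [heading_text, first_paragraph_or_None]
        self._capture = None  # "heading", "para", or None
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self._capture, self._buf = "heading", []
        elif tag == "p" and self.blocks and self.blocks[-1][1] is None:
            self._capture, self._buf = "para", []

    def handle_data(self, data):
        if self._capture:
            self._buf.append(data)

    def handle_endtag(self, tag):
        text = " ".join("".join(self._buf).split())
        if tag in self.HEADINGS and self._capture == "heading":
            self.blocks.append([text, None])
            self._capture = None
        elif tag == "p" and self._capture == "para":
            self.blocks[-1][1] = text
            self._capture = None


def slow_answers(html, max_words=60):
    """Return (heading, reason) pairs for headings without a fast answer."""
    parser = AnswerBlocks()
    parser.feed(html)
    flagged = []
    for heading, paragraph in parser.blocks:
        if paragraph is None:
            flagged.append((heading, "no paragraph follows the heading"))
        elif len(paragraph.split()) > max_words:
            flagged.append((heading, "first paragraph is long"))
    return flagged


# Hypothetical page body, for illustration only.
LONG_PARAGRAPH = " ".join(["word"] * 80)
SAMPLE_HTML = (
    "<h2>What is llms.txt?</h2><p>It is a proposed guide file for AI systems.</p>"
    "<h2>Pricing</h2><div>See the image below.</div>"
    f"<h2>Changelog</h2><p>{LONG_PARAGRAPH}</p>"
)

flagged = slow_answers(SAMPLE_HTML)
for heading, reason in flagged:
    print(f"{heading}: {reason}")
```

A flag here is a prompt to reread the section, not a verdict. Some questions genuinely need a long first paragraph.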

3. Entity clarity

Machines need to understand who is speaking, not just what is being said.

Your company name, product names, author identity, About page, Contact page, legal pages, and structured data should reinforce the same entity story. If your brand is inconsistent across the site and the wider web, retrieval confidence drops.

That is one reason SavageAudit pairs the technical layer with an internet and social presence audit. AI discovery is not just a file problem. It is also a public-presence problem.

4. Evidence density

Citable content tends to be specific content.

The strongest pages usually have:

- concrete examples

- clear comparisons

- named methods

- screenshots or product references backed by visible text

- real constraints instead of generic claims

If a page says "we are the best" but cannot show how, the technical wrapper around it does not matter much.

5. Internal routing across topic clusters

AI systems do not only land on homepages and sales pages.

They often surface supporting pages that answer narrower subquestions. That means your site architecture needs to route crawlers and readers toward those pages cleanly. Comparison pages, methodology pages, glossaries, FAQ hubs, and blog explainers often do a lot of the heavy lifting here.

If your internal linking is weak, the site becomes harder to interpret as a connected knowledge graph.

So where does llms.txt fit?

After those layers.

Not before them.

When llms.txt is worth implementing

There are real cases where it makes sense.

llms.txt is usually worth the effort when:

- your site has a large developer docs surface

- your product has API references, SDK guides, and fragmented help content

- your team wants one obvious machine-readable starting point for canonical resources

- you can maintain the file as the docs set evolves

In those cases, the file can act like a curated map. That is useful because many documentation libraries are sprawling, repetitive, and hard to traverse from the outside.

But even then, the file should point to pages that are already good.

If the destination pages are weak, the map is just a faster route to weak material.

When llms.txt is a distraction

It is usually a distraction when:

- your homepage still has vague messaging

- your key pages are not well linked internally

- your brand signals are inconsistent across the site

- your structured data is missing on pages where it is obviously appropriate

- your content library is tiny and easy to navigate already

- your team has no process to maintain the file after launch

For most startups and service sites, the first hour spent on llms.txt is less valuable than the first hour spent tightening titles, fixing crawl rules, improving answer blocks, or strengthening proof.

A simple decision rule

Use this rule before you add llms.txt.

If you cannot confidently answer yes to these five questions, do not treat llms.txt as the next priority.

- Are our core pages crawlable and indexable?

- Can the best answer on each page be extracted quickly from visible text?

- Are our company and product entities consistent across site pages and the wider web?

- Do we have clear internal links between commercial pages, support pages, and evidence pages?

- Do we actually have enough documentation complexity to justify a machine-readable guide file?

If the answer to question five is no, the file may be mostly symbolic.

The practical priority stack for AI discovery

If you want a cleaner operating order, use this one.

| Priority | Focus | Why it comes first |
|---|---|---|
| 1 | Crawlability and snippet eligibility | No access means no discovery surface |
| 2 | Strong page structure and visible answers | Retrieval systems need usable answer blocks |
| 3 | Structured data on the right page types | Helps interpretation and entity clarity |
| 4 | Internal linking and topic coverage | Improves discovery paths and supporting-link depth |
| 5 | Public entity consistency | Reinforces trust in who is speaking |
| 6 | `llms.txt` | Optional guidance layer after the real work is done |

That order is much closer to how real visibility gains are won.

What to do next

If you are auditing AI discovery right now, do not ask "Should we add llms.txt?" as the first question.

Ask these instead:

- Which pages should plausibly be cited for our core questions?

- Can a crawler reach them cleanly?

- Can a model extract a direct answer from them fast?

- Do those pages reinforce a consistent brand and entity story?

- Do they link to adjacent evidence and support pages?

That is the higher-leverage sequence.

If you want help diagnosing that sequence, start with SavageAudit's AI visibility audit. If your bigger issue is that your public footprint is thin or inconsistent, the better starting point is the internet and social presence audit.

llms.txt may still be worth adding later.

It just should not be the thing you hide behind while the core site is still unreadable.

Common questions

Does Google require `llms.txt` for AI Overviews or AI Mode?

No. Google's current documentation says AI features do not require special AI-only technical setup beyond normal Search requirements.

Can `llms.txt` replace `robots.txt`?

No. robots.txt governs crawler access. llms.txt is an optional guide file for systems that choose to read it. They solve different problems.

Does structured data guarantee better AI citations?

No. Structured data can improve machine understanding, but it does not override weak content, weak trust signals, or weak page structure.

If I only do one thing for AI discovery this quarter, what should it be?

Improve the pages you actually want cited. Make them crawlable, snippet-eligible, clearly structured, well linked, and supported by specific evidence.